My little media lab began with a movie scanner that I bought to digitize my dad's treasures from the forties. I installed it in the lab on my boat, added a fast gaming PC that gave me a good excuse to buy an Oculus Rift, and started digitizing 8mm reels.

One afternoon after showing all this to a dock neighbor, I agreed to take on a batch of Super 8 movies from his childhood. I picked up an external USB hard drive to make sure I wouldn’t run out of space, and was in business!

Seven years later, I am sitting in a mobile lab with 180 TB of network-attached storage (NAS), monitored by Home Assistant. It is shared by five desktops, each of which also has a local filesystem optimized for its particular specialty (slides, movies, video, etc.). I maintain an inventory of thumb drives ranging from 16 to 512 gig, along with fast 1-2 terabyte SSDs for bigger jobs. And to let folks share with family even while their jobs are in progress, I sometimes pipe files to cloud services.

I never imagined that I would one day trundle into my dotage with hierarchical file systems of other people’s stuff in addition to my own daunting archives. This is not as sexy as the optical and magnetic contraptions at the other end of the digitizing workflow, but it is very much worth discussing… as these are now the “sources” from a future perspective.

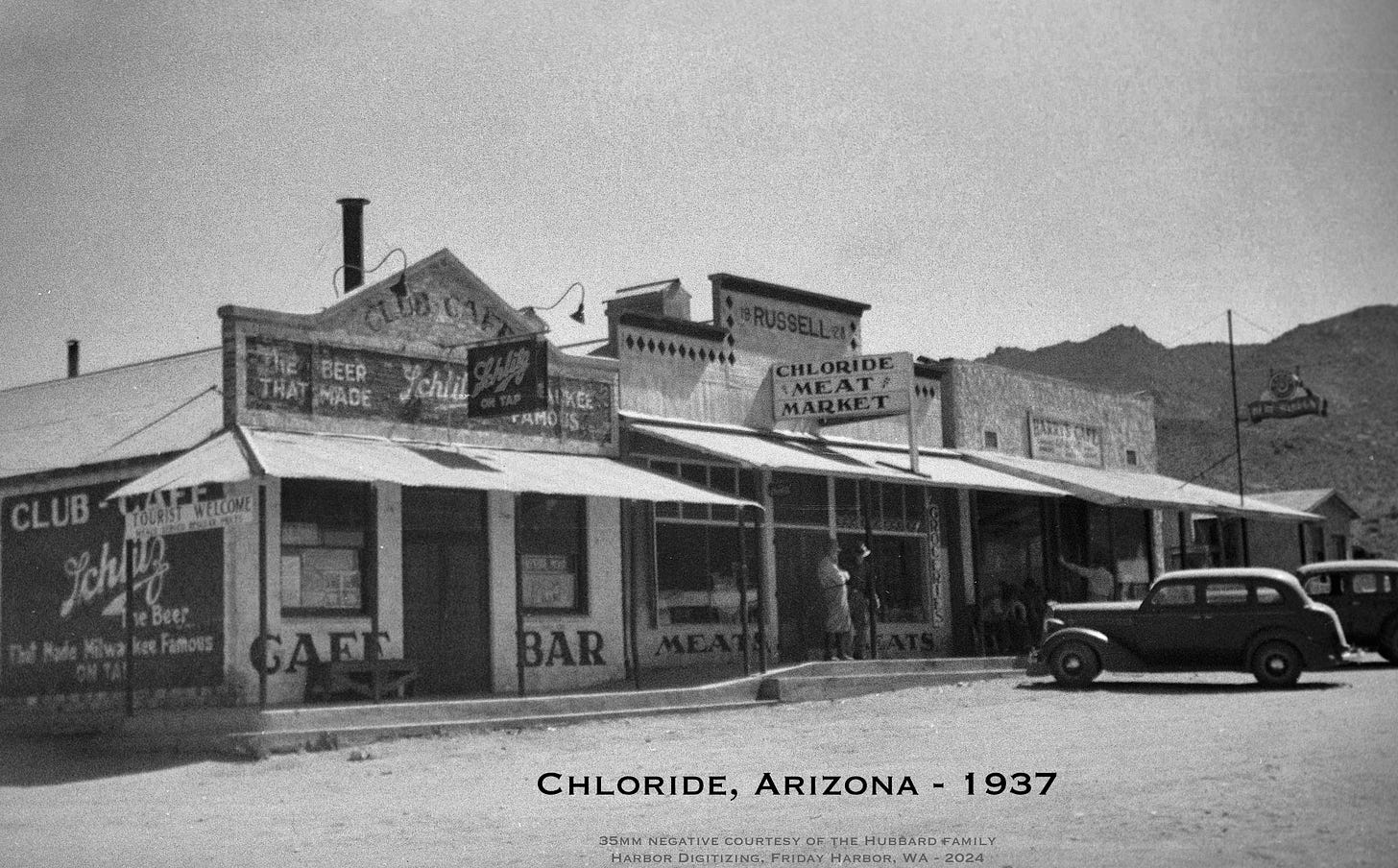

Imagine that someone just strolled in with a box of old negatives from the 1930s. What lands in my filesystem as the project is completed? There is more to this than scanning a folder of JPEGs.

The files fall into three broad categories…

1. Files we Want

We can learn a lot from the language of professional archivists, who have turned “digital asset management” into a science. There are three types of files we care about:

Preservation

These are the most accurate possible representations of the analog sources. Size is no object; we want to get as close as we can get to the originals, within the constraints of available tools. Think of preservation files as “archival quality for future generations.”

I did ten reels of challenging 16mm film last week with serious vinegar syndrome and wrinkled sections that split longitudinally… the originals will likely be tossed. My VOC monitor was through the roof, and I could taste acetic acid in my throat. These preservation files that I made have become the sources, regardless of quality losses incurred during the scans. This is a heavy responsibility, since the very nature of analog media is that the process is not perfect.

There is a history of heartbreaking failures along these lines. One common scenario is when people converted home movies to newfangled VHS back in the ’70s… then threw away the originals. Videotape seemed like magic then, but we now know that it is awful, and even small shops like mine with prosumer scanners can get more out of film than those blurry VHS versions, even with simple title screens and period music. Just being able to extract every film frame is a huge improvement over NTSC video, and it is always sad to hear that the originals are long gone. (Whenever this comes up, I digitize the tape, then tell the client I'll give a full refund if they can find the source reels.)

So here's the question: what future magic AI frame-interpolating inference-vision upscaler will make today’s methods look as antiquated to our descendants as those old VHS tapes do to us? This is why I always tell people to keep their sources, but some really want to get rid of musty tonnage. What are we losing in the process that will cause a <headdesk> response a quarter century from now?

Preservation files may not be convenient or even possible for most people to use; but they need to be as close as possible to perfection within the limits of our equipment budget and skills.

In the case of the box of old negatives mentioned above, these would be RAW files from the capture process, shot with a mirrorless Sony A7iii on a Kaiser copy stand against a color-accurate backlight. They are 50 MB each, not conveniently viewable, and carry no edit history. But if done well, they have a huge dynamic range that is deeper than it appears.

Mezzanine

Second, and most familiar, we export those sources to mezzanine files that are as good as they can reasonably be, balancing quality against usability. These are the files that people can share with family, print, or otherwise enjoy. In the case of our negative example, they would be Lightroom-exported hi-res JPEGs incorporating my edits. The client can always go back to the RAW files and do it better… nothing about my editing is cast in stone. This is especially important with special images that need to be Photoshopped: starting from RAW gives much higher quality results than tweaking a JPEG that has already had its density range constrained, with compression artifacts added just below the level of visibility. Mezzanine files would be comparable to the ones from our smartphone cameras… decent images with a manageable file size in the 5 megabyte range on average.

Access

In addition, we often need convenient versions that are ephemeral, cheap, and small. These may be Facebook or textable shares compressed to about 10% of the keepers on your hard drive, quick phone videos, digital contact sheets for easy access to the scanned photo library, print publications, or other lossy versions. They are handy, but it would be as tragic as those VHS copies of old film if they were all we take away from an expensive digitizing process.

A lot of folks don't know that images shared on Facebook are hugely reduced. A 3.5 MB image may be about 160 KB if saved from a message, and while it looks great on the small screen, so much has been lost. I also have to be careful when sending video clips that they don't accidentally become the archives. A video of Grandma that is delightful to share on Facebook will look blurry on a computer ten years from now… if you can even recover it.

Preservation, Mezzanine, and Access - these mean different things in different media types, but the distinction should guide everything we digitize. The definitions can overlap considerably when the initial capture is what the client wants and there is no reason to conjure alternative versions… like making an MP3 from a non-critical audio cassette. A more critical tape may have a WAV, FLAC, or Audacity AUP project in addition to the exported MP3 or CD-ready format with ID3 tags and track markers. We are careful to do this with special ones, high-quality music, and some reel-to-reel sources (especially if the client intends to do some audio editing). But an old answering machine tape goes straight to MP3, making those three archival file types irrelevant.

Holding on to preservation files along with the rest is a critical responsibility to our descendants.

2. Files we Spawn

In addition to those three flavors of archive files, the digitizing processes themselves can leave cruft in their wake.

A good example is the movie scanner (which will get its own feature article soon, as a lot is happening in that space). This contraption is clever — it takes a photo of every film frame, right down to the grain, generating a large preservation file that is later processed to create an AVI mezzanine. We then export that to a much more convenient MP4 access file that looks identical on screen but is technically lossy with compression artifacts. For most practical purposes, the MP4 is fine… but I always suggest that people use the AVI for editing projects. Unfortunately, the actual FILM source file from the scanner is a pain, since it can only be used with Retroscan software (and they went bankrupt last month). But that is where we go for individual frames or re-exports with different file specs, so I keep all those in my archives, just in case. The result of all this is file management complexity:

Scan movie to FILM file in fast internal SSD

Backup FILM to NAS archive

Export to AVI

Export AVI to MP4

Copy AVI and MP4 files to client folder on NAS

Export to thumb drive

Delete duplicates (keeping FILM, AVI, & MP4)
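The backup, copy, and delete steps above are where files can quietly go missing, so here is a minimal sketch of how they might be guarded with checksums. This is not the lab's actual tooling: the function names `sha256_of`, `verified_copy`, and `safe_to_delete` are hypothetical, and the sketch assumes the NAS is mounted as an ordinary folder.

```python
import hashlib
import shutil
from pathlib import Path


def sha256_of(path: Path, chunk_size: int = 1 << 20) -> str:
    """Hash a file in chunks so a huge scan never has to fit in memory."""
    digest = hashlib.sha256()
    with path.open("rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def verified_copy(source: Path, dest_dir: Path) -> Path:
    """Copy source into dest_dir, then confirm the copy is byte-identical."""
    dest_dir.mkdir(parents=True, exist_ok=True)
    target = dest_dir / source.name
    shutil.copy2(source, target)  # copy2 also carries over timestamps
    if sha256_of(source) != sha256_of(target):
        raise IOError(f"checksum mismatch copying {source} to {target}")
    return target


def safe_to_delete(working_copy: Path, archive_copy: Path) -> bool:
    """A working duplicate may go only if an identical archive copy exists."""
    return archive_copy.is_file() and sha256_of(working_copy) == sha256_of(archive_copy)
```

The idea is simply that nothing on the fast internal SSD gets deleted until `safe_to_delete` confirms that the archive copy exists and matches.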

Other media types leave clutter as well, depending on how you define that term. Going back to the negatives from the ’30s, our scan creates RAW captures in the Lightroom internal library, backed up to NAS. But the moment I start editing to create cropped and white-balanced JPEG mezzanine files that actually look good, we also generate Lightroom catalog entries. The catalog is the sole repository of those edits (along with its backup), though there is an option to put them into sidecar XMP files that could ride along with the RAW and JPEG folders.

Of course, our routine edits are rarely heroic… so instead of cluttering client thumb drives with those I just keep the full history in the huge catalog where I can fix something if needed. If a client wants to sharpen and colorize that rare image of Dad, then they can start from RAW and ignore my basic edits. This gets messier with negatives, with Negative Lab Pro software having special sauce that would be harder to replicate, and occasionally I do a deep dive into dust removal, ancient Ektachrome red correction, or perfectionist fine-tuning… but it hasn’t yet been necessary to export the detailed history of individual edits.

From a file management perspective, this gets more complicated when multiplied by multiple clients with multiple projects each. If you want to digitize your own collections and are horrified by all this, it’s OK… a simple hierarchy can reflect your personal organization system. But when you throw hundreds of strangers into the mix, it can make your head hurt… especially since the file-naming conventions of different people add meta-variables. (Do we auto-increment filenames through one client’s whole archive, or start over with each carousel… especially if their raw media is meticulously organized and not just a shoebox of miscellany? Those decisions become huge commitments that are extremely difficult to change.)
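To make that naming decision concrete, here is a small sketch of the two schemes side by side. Everything in it is illustrative: the client name, the carousel labels, the zero-padding widths, and the function names are assumptions, not a convention used in the lab.

```python
def continuous_names(client: str, carousels: dict, ext: str = "jpg") -> list:
    """One counter runs through the client's whole archive."""
    total = sum(carousels.values())
    return [f"{client}_{number:05d}.{ext}" for number in range(1, total + 1)]


def per_carousel_names(client: str, carousels: dict, ext: str = "jpg") -> list:
    """The counter starts over with each carousel, which is kept in the name."""
    names = []
    for carousel, count in carousels.items():
        for number in range(1, count + 1):
            names.append(f"{client}_{carousel}_{number:03d}.{ext}")
    return names


# Hypothetical client with two carousels of two slides each.
carousels = {"c01": 2, "c02": 2}
print(continuous_names("smith", carousels))
print(per_carousel_names("smith", carousels))
```

The first scheme gives every image a unique number but forgets which carousel it came from; the second preserves the client's own organization at the cost of longer names. Either way, renaming later means touching every file and every reference to it, which is why the choice is such a commitment.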

3. Files we Deliver

Finally, there are deliverables. I’ve already mentioned the thumb drives, which are pretty straightforward; after a few sloppy years of ad-hoc file management, I finally stabilized on a simple system that was obvious in retrospect. Every client has a folder on the NAS, and that contains whatever is needed to logically reflect the stuff we have talked about, neatly organized and labeled.

I do add explanatory names for some things, as I can’t assume that it’s always obvious. A collection of digitized home movies is likely to have a couple of folders like this:

AVI - editing

MP4 - sharing

This helps convey the idea that the MP4 files are smaller and more convenient, and the AVI are more “serious.” I do a similar thing with the mountain of RAW files, which most folks will never use… but the law of preservation still applies.
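For anyone who wants to set up the same kind of skeleton for every project, a sketch along these lines would do it. The two folder names come from the list above; the function name, the NAS mount path, and the client folder name are hypothetical, and a RAW folder could be added by passing a longer list.

```python
from pathlib import Path

# Folder names taken from the home-movie example above.
DELIVERABLE_FOLDERS = ("AVI - editing", "MP4 - sharing")


def make_client_folders(nas_root: Path, client: str, folders=DELIVERABLE_FOLDERS) -> list:
    """Create (or reuse) the labeled deliverable folders for one client."""
    created = []
    for name in folders:
        folder = nas_root / client / name
        folder.mkdir(parents=True, exist_ok=True)  # harmless if it already exists
        created.append(folder)
    return created
```

Calling `make_client_folders(Path("/mnt/nas/clients"), "smith-home-movies")` would create both folders under that (assumed) mount point, and running it again on an existing project changes nothing.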

That’s all pretty easy, but there is one other thing I want to mention here. Some projects can drag on for months, and folks can start to wonder what’s going on if they don’t hear from me for a long time. This is one of the reasons for using a cloud service, which I originally did just to pass along teasers.

But there are good reasons for this, so now it is a routine — at least for photos. Every client gets a SmugMug gallery, and that’s where I park JPEG mezzanine photos as they get done. With permissions set to allow sharing (obfuscated URL, no password), the client can get pleasure out of the process right away… and share them with family.

These are full-size JPEGs, which clients can freely download (individual photos, or the whole gallery). SmugMug also provides a very good printing service, generating a few affiliate nickels on the rare occasions that happens.

One might argue that it is risky to deliver the product before getting paid, but problems like that are very rare here and it is worth the small risk in exchange for excellent goodwill… and the fun of the process. I highly recommend it.

On a smaller scale, I do this with film and video as well, though without a fiber connection that quickly becomes prohibitive. But in general, providing some goodies while the project is in progress makes everybody happier.

Tips, Tricks, and Techniques

Finally, I want to mention one of my favorite kinds of geek media. Back in the early seventies, when I was building my homebrew 8008 system and hanging out a shingle as a custom industrial-control system engineer, I savored a special class of articles in the trade journals and hobby rags.

Instead of long-form features, these were collections of working designs, tricks, techniques, shortcuts, brief how-to pieces, and nifty ideas. With column names like “Ideas for Design,” “Designer’s Casebook,” “Hints and Kinks,” and more, these were treasures… often published as compilations that I had on my bookshelves for decades. My first published article, in fact, was one of these - a short piece in Electronics magazine about interfacing a teletype with a microprocessor… speaking Baudot! (I got $50 for it in 1974.)

I mention this non-sequitur because I want to recommend my favorite of this genre, very much alive 50 years later… and right here on Substack! Gar’s Tips & Tools is a treasure trove of things that will make your shop or lab hum, including clever ideas about familiar tools, fabrication tricks, amazing uses for adhesives, leveling-up techniques, and ways to fine-tune your fabrication processes. He has put out 176 issues so far, and now that I have written <cough>four</cough> I am suitably impressed. It’s an ongoing geek gold mine, and is the descendant of the Tips & Tales from the Workshop book he wrote while editorial director of Make: magazine. Now we can enjoy weekly-ish posts with the same playfully brilliant flavor.

I first encountered Gareth in 1991 when he interviewed me for Futurist, Mondo 2000, Wired, and others… then a decade later we worked on a 4-part series in Make: about the mobile lab that is now parked next to my big one. His sparkly brain has helped shape the community of nerdly makers for decades, and his newsletter is one of the few that I immediately read from beginning to end… with lots of “oh cool, gotta remember that!” moments. Recommended.

News from the Lab

The filesystem tour was more elaborate than I imagined, so some of the geeky digitizing news bits will have to wait. A lot is going on, including an experimental work-around for a maddening quirk in the Hyperdeck Studio Mini… I’m piping multiple HDMI and SDI feeds from the console to the studio desk, with fiendish plans to take advantage of ISO capability. This will all make sense shortly…

Fun to catch up with Steve (I'm a new subscriber), as I first encountered him in the early 1970s when I edited the short-lived Bike World magazine (sold to Rodale as part of a divorce settlement by the Bike World and Runner's World founder). I can relate to the file-storage labyrinth, though on a much smaller scale. At 82, I'm engaged in cleaning-up transcripts from audio recorded on cheap Radio Shack decks 20 to 40 years ago. Oh yes, digital degradation is something I live with daily - and modern tools like Adobe Voice Enhance, et al., can't do a lot to help.

Thanks so much for the shout-out, Steve. It's been fun traversing the ever-evolving technoverse with you for all these years.